Reading Time: 11 minutes

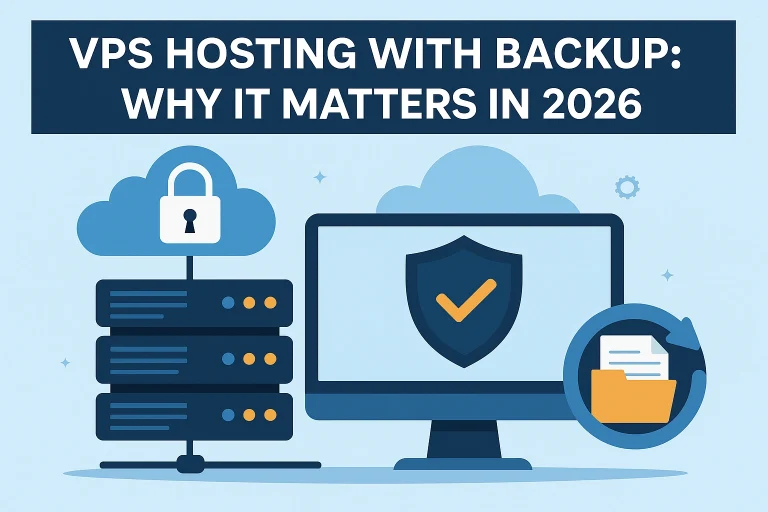

Your website is more than the place where customers find your brand. It is the engine that keeps your business running in real time, creating engagement and an experience 24/7. From the moment your homepage loads until checkout, the finish line of the customer's online experience, every step depends on one thing: the dependability of your hosting. If a server glitch hits, everything else stops, including sales, trust, and goodwill.

As of 2026, smart brands no longer treat hosting as an afterthought. They are choosing VPS hosting plans that include a backup option. A VPS hosting plan provides a fully functional platform that delivers speed, control, and persistence. VPS hosting is not simply about keeping your site online. It helps your site withstand traffic spikes, restart quickly, and scale up safely. A surge of traffic should not strand you, a plugin failure should not take your site down, and a security breach or exposure should not turn into extended downtime. A secure VPS with an automatic backup service keeps your web presence safe, functional, and dynamic. Consider it business insurance more than an upgrade: it protects the lifeblood of your brand in an era where downtime is not acceptable.

Understanding VPS Hosting: What It Means

What Does VPS Stand For?

A Virtual Private Server, or VPS, combines the advantages of shared hosting and dedicated hosting. Think of a floor in a high-rise. The overall structure, in this case the physical server, is shared, but the apartment or suite in the building is all yours: you own the doors, you set the rules, and you pay the bills. In hosting terms, you get your own allocation of CPU, RAM, storage, and bandwidth, reserved for your site and not shared with the other tenants on the physical server.
Each VPS runs its own operating system instance with its own configuration. As a result, your environment is isolated from what other tenants do on the same physical server.

Key Advantages of VPS Hosting: More...